Please join our new IEEE Collabratec Technology and Society Community!

What role does AI play, and what role could it play, in helping us enjoy security in our places and spaces? Perhaps we could design technology-enabled spaces for the purpose of strengthening the community and empowering community action.

Social media companies have intentionally created platforms that actively spread disinformation. What can we do to protect our society against disinformation? A good place to start would be limiting how large and powerful these social media platforms can get.

Young people’s unique understandings and perspectives are often not considered in debates and discussions around privacy and security. This article outlines a youth-centric notion of digital privacy and guiding principles around privacy developed by young people from Antigua and Barbuda, Australia, Ghana, and Slovenia.

The 2022 IEEE International Symposium on Digital Privacy and Social Media (ISDPSM 2022), with the theme “Applying Engineering Solutions to a Complex Set of Issues,” will take place on August 1, 2022, at the San Jose Marriott Hotel in Silicon Valley, San Jose, California, USA.

Having a philosophical road map of what is required might help those with the skills to design intelligent machines that will enable and indeed promote human flourishing.

When we fail to attend to the source, disinformation can be stored along with information, making it difficult to distinguish the good penny from the bad penny.

Social media have been seen to accelerate the spread of negative content such as disinformation and hate speech, often unleashing a reckless herd mentality within networks, further aggravated by malicious entities using bots for amplification. So far, the response to this emerging global crisis has centered around social media platform companies making reactive moves.

Katina Michael of the Australian Privacy Foundation speaks with Gemma Veness of ABC24hour (June 2, 2019) about the implications of…

Social media (SM) usage is increasing across the globe. Of the 7.6 billion people populating Earth, 4 billion are believed to be Internet users. Over 3 billion are SM users, representing over 40% global penetration.

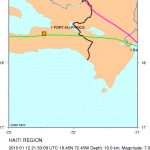

Information generated on social media sites such as Twitter, Facebook, Flickr, and Instagram is fast becoming a powerful and ubiquitous new source of time-critical data needed to aid decision making during extreme weather events and emergency situations.

Zuckerberg’s acknowledgement of the need to address issues related to election influence, “fake news,” and social media addiction is a significant step.

The Wall Street Journal reports that “Facebook Concedes to Effects on Health.” The social media health impact acknowledged is related…

Contrarian computer scientist Jaron Lanier writes, “If you observe humans using computer programs that are designated to be ‘smart,’ you…

Social media, driven by the explosive uptake of mobile computing, has caused a systematic shift in the structure of personal…

Digital Militarism: Israel’s Occupation in the Social Media Age. By Adi Kuntsman and Rebecca L. Stein. Stanford University Press, 2015, $21.95…

It should be noted that an early, if not first, instance of an online physical attack on a person has…

The issue of how video games or movies affect behavior is a recurrent topic in academic and public discourse.